Two Google Glass developers, Brandyn White and Andrew Miller, recently revealed an augmented reality setup for Glass. The team has included a practical real-world demo and has also released the code through the OpenGlass project. Perhaps more interesting, the setup complies with the current rules surrounding Google’s Mirror API.

The demo shows how street signs can be translated from one language to another, how you can look up how tall the Washington Monument is, and even how you can check whether a restaurant is open or closed and how many stars other visitors have given it. The Mirror API compliance comes down to how all of this is done.

Basically, the app displays an image and then gives results and answers based on that image (as opposed to the actual live view). For a non-Glass comparison, this is along the lines of using Google Image Search, in that you are presenting an image instead of a current live view. Of course, while this method manages to stay within the Mirror API limitations, the hope is that one day things will be shown without the need for the in-between image.

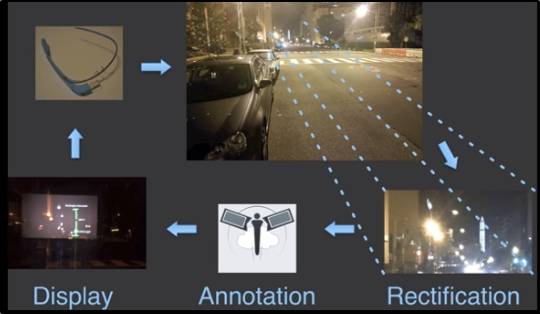

The image above (a screen capture from the video) shows the process. Things begin by capturing an image with Google Glass. From that point the image is rectified, annotated and re-displayed for the Glass user, with the re-displayed image showing the details you had been searching for.
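For readers who think in code, the capture, rectify, annotate and re-display steps can be sketched roughly as below. This is only an illustration and not the OpenGlass code: every name here (`Frame`, `capture`, `rectify`, `annotate`, `redisplay`, `run_pipeline`) is hypothetical, the image data itself is left out, and "rectify" is assumed to mean straightening the captured view.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Frame:
    """A still image moving through the pipeline (pixel data omitted)."""
    source: str                       # label for where the still came from
    rectified: bool = False           # True once the view has been straightened
    annotations: List[str] = field(default_factory=list)


def capture(source: str) -> Frame:
    # Hypothetical stand-in for taking a photo with the Glass camera.
    return Frame(source=source)


def rectify(frame: Frame) -> Frame:
    # Hypothetical stand-in for straightening the captured view.
    return Frame(frame.source, True, list(frame.annotations))


def annotate(frame: Frame, lookup: Callable[[str], List[str]]) -> Frame:
    # Attach whatever the caller-supplied lookup returns for this still.
    return Frame(frame.source, frame.rectified,
                 frame.annotations + lookup(frame.source))


def redisplay(frame: Frame) -> str:
    # Build the text that would accompany the image sent back to the user.
    return f"{frame.source}: " + "; ".join(frame.annotations)


def run_pipeline(source: str, lookup: Callable[[str], List[str]]) -> str:
    # The answer is attached to a still image, never to a live view.
    return redisplay(annotate(rectify(capture(source)), lookup))
```

The point of the sketch is the shape of the flow: each step takes a still and hands a new still to the next step, which is what keeps the approach on the image-based side of the live-view distinction described above.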

In addition to this augmented reality demo, the same team of developers also has a few other items listed on the OpenGlass website. Some of those include using Glass to have questions answered by way of Twitter, by other humans and more.

VIA: SelfScreens