Alexa is getting more new features and enhancements. The last ones we covered here were the all-new Auto Mode and Start My Commute, which followed the communication, safety, and entertainment features introduced in September. Over the past few months, Amazon has been releasing several improvements, such as better app controls in preview mode and a redesign focused on the items people actually use. We have already learned about hands-free Alexa support, commute and traffic conditions, medication management, and more.

Amazon recently announced ‘natural turn-taking’, a new feature that uses a range of cues, from acoustic to linguistic to visual, to help Alexa interact more naturally. The goal is to remove the need to repeat the wake word on every turn of a conversation.

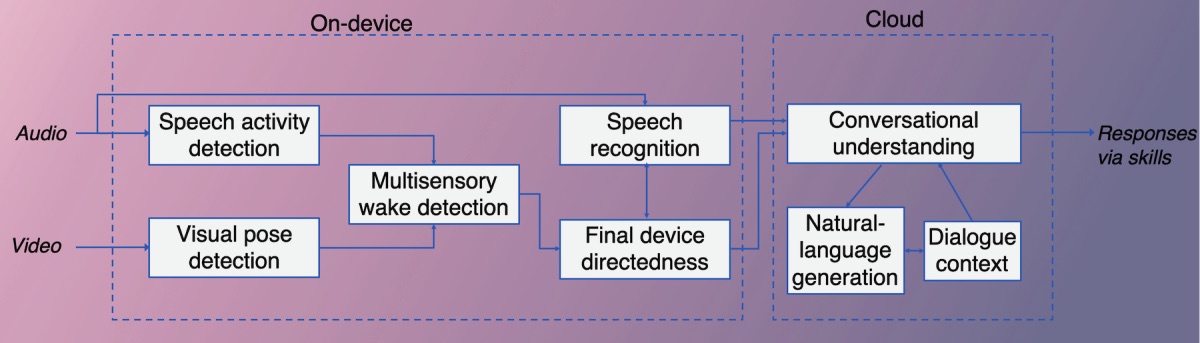

This feature is an advancement in the way Alexa “hears” and “understands”. Its AI will be able to tell when users are done speaking, whether speech is directed at an Alexa-powered device or not, and when a response is expected.

Alexa already has a Follow-Up Mode, and natural turn-taking is a related improvement. Alexa doesn’t rely on acoustic cues alone to determine whether speech is directed at the device or not. It also uses other cues, such as visual information captured by a camera, including a person’s position relative to the device.

All of these cues are combined with computer vision algorithms to determine device directedness, that is, whether a given utterance is actually meant for Alexa. Getting this right lets Alexa respond when it should and stay quiet when it should not.
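To make the idea of combining cues more concrete, here is a toy sketch in Python. It is not Amazon's implementation, which has not been published in this detail. The `Cues` class, the weights, and the threshold are all hypothetical, chosen only to show how acoustic, linguistic, and visual scores might be fused into a single device-directedness decision.

```python
from dataclasses import dataclass


@dataclass
class Cues:
    """Hypothetical per-utterance scores, each between 0.0 and 1.0."""
    acoustic: float    # e.g. how much the audio sounds like speech aimed at a device
    linguistic: float  # e.g. how much the words read like a request
    visual: float      # e.g. whether the speaker is positioned toward the device


# Assumed weights and threshold, for illustration only.
WEIGHTS = {"acoustic": 0.4, "linguistic": 0.3, "visual": 0.3}
THRESHOLD = 0.5


def directedness_score(cues: Cues) -> float:
    """Weighted average of the three cue scores."""
    return (
        WEIGHTS["acoustic"] * cues.acoustic
        + WEIGHTS["linguistic"] * cues.linguistic
        + WEIGHTS["visual"] * cues.visual
    )


def is_device_directed(cues: Cues) -> bool:
    """Treat the utterance as device-directed if the fused score clears the threshold."""
    return directedness_score(cues) >= THRESHOLD


if __name__ == "__main__":
    talking_to_device = Cues(acoustic=0.9, linguistic=0.8, visual=0.9)
    side_conversation = Cues(acoustic=0.2, linguistic=0.1, visual=0.1)
    print(is_device_directed(talking_to_device))
    print(is_device_directed(side_conversation))
```

A real system would most likely learn this fusion from data rather than use fixed weights. The sketch only shows why adding a visual cue can change a decision that audio alone would get wrong.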

As described, ‘natural turn-taking’ should be able to handle “barge-ins”, that is, customer interruptions of Alexa’s output speech. It also keeps track of speech timestamps, noting when the interruption occurred and when the speech began. This information is then sent to the Alexa Conversations dialogue manager, which determines the next response.
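The barge-in timing described here can be pictured with a small Python sketch. The function names, the use of seconds as the unit, and the two action labels are assumptions for illustration. They do not reflect the actual Alexa Conversations interface.

```python
from typing import Optional


def detect_barge_in(
    output_start: float, output_end: float, user_speech_start: float
) -> Optional[float]:
    """Return how many seconds into Alexa's output the user started speaking.

    Returns None if the user's speech did not overlap the output,
    meaning there was no barge-in. All times are in seconds.
    """
    if output_start <= user_speech_start < output_end:
        return user_speech_start - output_start
    return None


def next_action(interruption_offset: Optional[float]) -> str:
    """Hypothetical stand-in for a dialogue manager's decision."""
    if interruption_offset is None:
        return "continue_output"
    return "stop_and_listen"


if __name__ == "__main__":
    # Alexa speaks from t=10.0s to t=15.0s; the user cuts in at t=12.5s.
    offset = detect_barge_in(10.0, 15.0, 12.5)
    print(offset, next_action(offset))
```

Knowing how far into the response the interruption landed lets a dialogue manager reason about what the customer had already heard before deciding how to reply.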

Natural turn-taking will soon enter beta testing. It’s not available yet, but Amazon is expected to work on the system very soon.