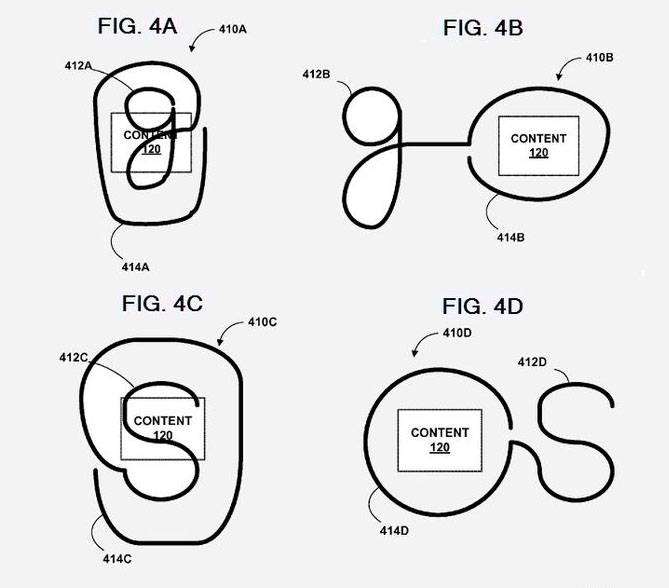

Never one to settle for the status quo, Google seems to be investigating never-before-seen methods of gesture control for Android or other touch-based systems. A newly published patent from 2011 indicates that its software engineers have come up with a unique method of highlighting and searching for various types of content, using a system that recalls Palm’s old Graffiti input. Essentially, you draw a lowercase “g”, then continue the trail of movement around whatever you want to search for on the screen. This in turn automatically searches the relevant Google service for the text, photo or video in question.

The patent covers a wide range of gestures in a staggering array of different configurations. That's not unusual, since the nature of the US patent system rewards those who cover all contingencies. But the basics are these: place your finger on a screen or trackpad, draw a command around content that’s already there, then either automatically search for it or perform another command. The methods of doing so differ and sometimes include multiple pieces of content, but the common thread in the patent is that it’s all achieved in a single motion, without removing your finger or pointing device from the surface.

To be honest, this seems a little complex for a smartphone: the motions described in Google’s patent just wouldn’t be practical on a screen smaller than 5 inches. But a tablet, or perhaps a device docked into a desktop mode? That makes a lot of sense. Google has already investigated the possibilities of gesture-based input in its Gesture Search for Mobile, and the software described here seems like a natural extension of those efforts. It’s unlikely that we’d see this come to fruition before a major revision to Android, so keep an eye out for the fruits of this labor at Google I/O this summer.

[via Patently Apple – thanks, Jack!]